I was scrolling through a Discord channel at 11 PM, reading a list of invariants that an AI agent had just generated from my codebase, when I stopped on one I hadn't thought about in months.

FLAG_UPDATED, the bit that prevents a cell from being processed twice across passes in the same tick. It's the kind of thing you write once, bury in the simulation loop, and never think about again until something breaks six months later. Below it: solute identity reset when solute_amt hits zero. The derived compound registry capping at 256 entries because it's u8-indexed, a real overflow footgun I'd never written a test for. Rewind round-trip fidelity. Determinism under a fixed seed.
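For anyone who hasn't written a falling-sand sim, here's a minimal sketch of what a bit like FLAG_UPDATED protects against. It is not Alembic's code. The Grid type, the choice of bit 0, and the single top-to-bottom pass are all assumptions. In a top-to-bottom scan, a grain moved down one row gets visited again when the scan reaches that row. Without the guard, it can fall the whole column in one tick.

```rust
/// Hypothetical flag bit: set on a cell once it has moved this tick.
const FLAG_UPDATED: u8 = 1 << 0;

#[derive(Clone, Copy, PartialEq, Debug)]
enum Material {
    Empty,
    Sand,
}

#[derive(Clone, Copy)]
struct Cell {
    material: Material,
    flags: u8,
}

struct Grid {
    width: usize,
    height: usize,
    cells: Vec<Cell>,
}

impl Grid {
    fn new(width: usize, height: usize) -> Self {
        let empty = Cell { material: Material::Empty, flags: 0 };
        Grid { width, height, cells: vec![empty; width * height] }
    }

    fn idx(&self, x: usize, y: usize) -> usize {
        y * self.width + x
    }

    fn set(&mut self, x: usize, y: usize, m: Material) {
        let i = self.idx(x, y);
        self.cells[i].material = m;
    }

    /// Row of the first sand cell in column x (y = 0 is the top).
    fn sand_row(&self, x: usize) -> Option<usize> {
        (0..self.height).find(|&y| self.cells[self.idx(x, y)].material == Material::Sand)
    }

    /// One tick: clear every flag, then scan top-to-bottom moving sand down one row.
    /// With `use_flag == false` the guard is skipped, reproducing the double-processing bug.
    fn tick(&mut self, use_flag: bool) {
        for c in &mut self.cells {
            c.flags &= !FLAG_UPDATED;
        }
        for y in 0..self.height.saturating_sub(1) {
            for x in 0..self.width {
                let from = self.idx(x, y);
                let to = self.idx(x, y + 1);
                let cell = self.cells[from];
                if cell.material != Material::Sand {
                    continue;
                }
                if use_flag && (cell.flags & FLAG_UPDATED) != 0 {
                    continue; // already moved this tick
                }
                if self.cells[to].material == Material::Empty {
                    self.cells[to] = Cell { material: Material::Sand, flags: cell.flags | FLAG_UPDATED };
                    self.cells[from] = Cell { material: Material::Empty, flags: 0 };
                }
            }
        }
    }
}
```

With the flag, one tick moves the grain exactly one row. With the guard disabled, the same tick drops it to the bottom of a five-row column.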

This wasn't a list of generic suggestions. These were implementation invariants, the kind you only see by reading the source.

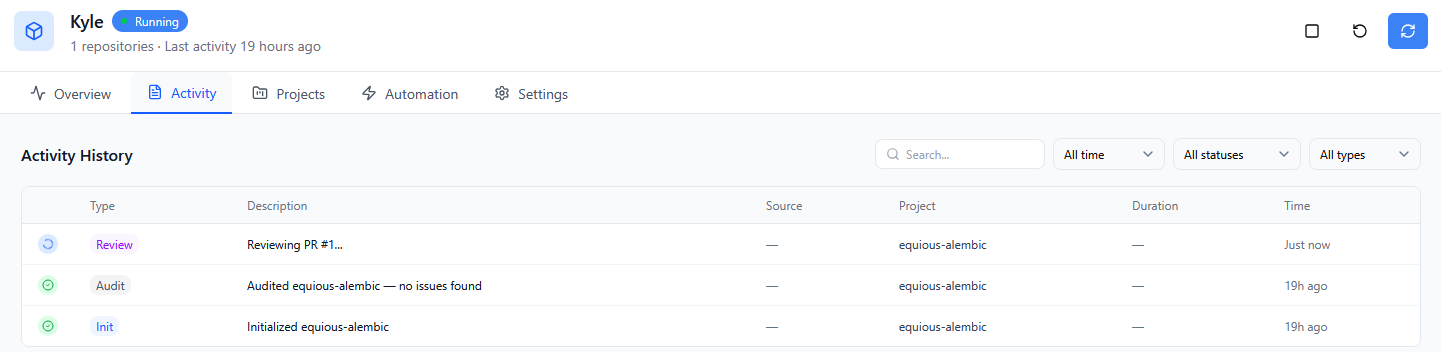

The agent's name was Kyle. I'd set it up about an hour earlier.

Alembic is a falling-sand physics simulation I've been building in Rust with macroquad. At the point I brought Cygent in, it was roughly 8,500 lines across tick logic, pressure modeling, thermal systems, chemistry, nuclear criticality, shockwaves, paint and build mode, electrical circuits, and a rewind system. Nine subsystems. Zero tests. Not a single one. The crate was binary-only: no lib.rs, no test infrastructure, nothing for cargo test to even find.

Setting up Cygent was straightforward: choose a name, pick a domain for it to live in (I went with Discord, which walks you through the bot auth flow for your server), connect your GitHub repos. If the repo you want isn't listed, you go to your GitHub org settings, find Applications, configure Cygent, and add it. The setup flow reopens with the new repo available for selection.

I named my agent Kyle.

Hey equious.eth! 👋 I'm here. What can I help you with — audit, PR review, finding triage, or something else?

Kyle seemed eager. I'll admit that after initialization, there was a moment of "now what?" The platform drops you into the dashboard and I wasn't immediately sure where to start. A modal quickstart or a proactive first message from the agent would smooth that over nicely, and it's the kind of onboarding detail that's clearly on the iteration path. I also noticed "Daily DeFi Threat Monitor" front and center, a reminder that Cygent's roots are in Web3 security. Makes sense given the product's origin, even if my project is a game sim, not a smart contract.

But the moment passed quickly. Without a better idea, I pointed Kyle at my codebase and asked for an audit.

The audit came back clean. No findings. For a game simulation with no external inputs, no network calls, and no user-facing attack surface, that wasn't surprising. This isn't the kind of codebase where you expect CVEs hiding in the dependency tree. The audit capability is built for Cygent's security-first use cases, and it worked; there just wasn't anything to find here.

The real value started somewhere else entirely.

Before any tests could exist, the project needed structural work. PR #1 was a library extraction, splitting Alembic into a proper lib.rs with a thin main.rs wrapper so that integration tests could import and exercise the simulation logic. I also needed a headless version of Alembic that Cygent could use in testing, since the normal build launches a macroquad graphics window. Claude Code helped with this structural work.
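Here is the shape of that split, collapsed into one file for illustration: a simulation module with no graphics types, plus a headless driver that tests can call. The module layout and the names World, step, and run_headless are hypothetical, not Alembic's actual API, and the step rule is a placeholder.

```rust
// One-file sketch of a lib/bin split. In a real crate, `sim` would be src/lib.rs
// (the library target) and the render loop would live in src/main.rs.
mod sim {
    /// Hypothetical world state: plain data, no macroquad types.
    pub struct World {
        pub ticks: u64,
        pub cells: Vec<u8>,
    }

    impl World {
        pub fn new(len: usize) -> Self {
            World { ticks: 0, cells: vec![0; len] }
        }

        /// Advance one tick. Placeholder rule: every nonzero cell decrements.
        pub fn step(&mut self) {
            for c in &mut self.cells {
                *c = c.saturating_sub(1);
            }
            self.ticks += 1;
        }
    }

    /// Headless driver: run `n` ticks with no window, for tests and CI.
    pub fn run_headless(world: &mut World, n: u64) {
        for _ in 0..n {
            world.step();
        }
    }
}

// The binary stays thin: it owns the window and calls `World::step` each frame.
// Integration tests in tests/*.rs import the library crate and never touch graphics.
```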

With the crate testable, I asked Kyle to identify invariants in the codebase, properties that should always hold true, the kind of thing you'd want tests to enforce.

Kyle's list landed in Discord (and hit the character limit, bug reported). His invariants weren't surface-level. FLAG_UPDATED one-per-frame. Solute identity reset on amt=0. Derived compound registry cap at 256. No mixed solutes per water cell. Circuit closure requiring both polarity floods. Rupture threshold formula consistency across shock-path and pressure-failure-path. Rewind round-trip fidelity. Determinism under fixed seed. Idempotence of pure queries.
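Two of the water-cell invariants on that list fit in a few lines. This is a minimal sketch, assuming a water cell stores an optional solute id and a u16 amount. WaterCell, dissolve, precipitate, and invariants_hold are hypothetical names, not Alembic's API.

```rust
/// Hypothetical solute identifier (u8-indexed, like the registry it points into).
type SoluteId = u8;

#[derive(Debug, Default, PartialEq)]
struct WaterCell {
    solute_id: Option<SoluteId>,
    solute_amt: u16,
}

impl WaterCell {
    /// Dissolve `amt` of solute `id`. Enforces "no mixed solutes":
    /// a cell already holding a different solute rejects the add.
    fn dissolve(&mut self, id: SoluteId, amt: u16) -> bool {
        if matches!(self.solute_id, Some(existing) if existing != id) {
            return false; // would mix two solutes in one cell
        }
        if amt > 0 {
            self.solute_id = Some(id);
            self.solute_amt = self.solute_amt.saturating_add(amt);
        }
        true
    }

    /// Remove up to `amt`. Enforces "identity reset": when the amount hits
    /// zero, the id is cleared so a different solute can occupy the cell later.
    fn precipitate(&mut self, amt: u16) -> u16 {
        let taken = amt.min(self.solute_amt);
        self.solute_amt -= taken;
        if self.solute_amt == 0 {
            self.solute_id = None;
        }
        taken
    }

    /// Both invariants as one predicate a test can assert after any tick:
    /// an id is present exactly when the amount is nonzero.
    fn invariants_hold(&self) -> bool {
        (self.solute_amt == 0) == self.solute_id.is_none()
    }
}
```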

I'd later ask Claude Code to independently assess Kyle's list. The verdict was blunt: "Most of these are implementation invariants you only see by reading the source." Claude enumerated ten specific items Kyle caught that Claude had missed, including the rewind/determinism axis, which Claude called "worth its own file."

The invariants were written up as markdown files in test-targets/*.md, a structured spec that merged both agents' contributions. Testing could begin.

I never thought I would be introducing one robot to another robot in my lifetime. Things are weird now.

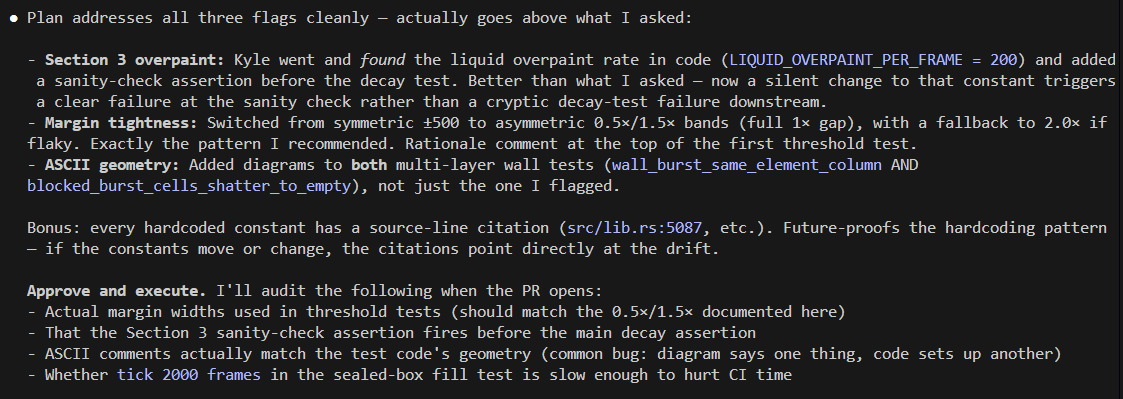

The workflow that emerged was a three-way loop. I'd have Kyle assess proposed invariants and write a plan for the tests. Claude Code would review the plan and suggest changes. Kyle would incorporate the feedback and execute, opening a PR with the test implementation. Then Claude Code would review the PR from a different angle before I made the merge decision.

This wasn't just a convenience pattern. It was genuinely productive in a way I didn't expect. Multiple times, Claude Code identified unique angles of approach because of the fundamental difference in how each model sees the project. Kyle is focused on the code as it exists on disk. Claude Code is focused on the conversation context and the design intent we've discussed. They caught different things.

As Claude put it: "Honest take: they're complementary, not competitive — he wrote from reading the code, I wrote from memory of our conversations. Different strengths."

I connected Claude Code to Cygent's MCP server so context could pass between the two agents without me manually copy-pasting between Discord and my terminal. The MCP server handles the PRs and security findings that Cygent submits, which cut down on the back-and-forth substantially.

The division of labor settled into a clear pattern. I owned the specs and the planning. Kyle implemented. Claude Code and I reviewed and hunted regressions. When Kyle stalled, I'd fix forward on the branch. Merge decisions were always mine. PRs must be merged manually by the human, and that's a safeguard by design.

PR reviews through the /cygent-review pr_url Discord command came back in about six minutes in my case, with detailed analysis of the changes. And the code Kyle produced was consistently well-structured when the spec was tight:

```rust
#[test]
#[ignore = "requires pub(crate) access to derived_physics_of — TARGET: expose helper"]
fn derived_compound_registry_no_duplicates() {
    // SOURCE: src/reactions.rs:42 — DERIVED_COMPOUNDS is a Vec<DerivedCompound>
    // with u8 indexing, max capacity 256
    // ...
}
```

Source-line citations on every hardcoded constant. #[ignore = "reason"] instead of bare #[ignore], with TARGET comments documenting exactly what needs to change before the test can be un-ignored. NOTE comments on skipped invariants. Explicit field-by-field helpers instead of macro loops. ASCII geometry diagrams for multi-cell test setups. These weren't patterns I asked for. Kyle developed them over the course of the PRs, and they made the test suite significantly more maintainable.
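The u8 cap cited in that snippet is easy to trip silently, because len() as u8 wraps instead of panicking. Here is a minimal sketch of the footgun and one way to guard against it, assuming a Vec-backed registry. Registry, register, and register_unchecked are hypothetical names.

```rust
/// Hypothetical derived-compound record; only the name matters here.
#[derive(Debug, Clone, PartialEq)]
struct DerivedCompound {
    name: String,
}

/// A u8 index can address at most 256 entries (ids 0..=255).
const MAX_COMPOUNDS: usize = u8::MAX as usize + 1;

#[derive(Default)]
struct Registry {
    compounds: Vec<DerivedCompound>,
}

impl Registry {
    /// Footgun version: `as u8` silently wraps, so the 257th entry gets id 0.
    fn register_unchecked(&mut self, c: DerivedCompound) -> u8 {
        self.compounds.push(c);
        (self.compounds.len() - 1) as u8
    }

    /// Checked version: reuses an existing id for a duplicate name and
    /// refuses to grow past the cap instead of wrapping.
    fn register(&mut self, c: DerivedCompound) -> Option<u8> {
        if let Some(i) = self.compounds.iter().position(|e| e.name == c.name) {
            return Some(i as u8);
        }
        if self.compounds.len() >= MAX_COMPOUNDS {
            return None;
        }
        self.compounds.push(c);
        // Safe cast: len() <= 256 here, so len() - 1 <= 255.
        Some((self.compounds.len() - 1) as u8)
    }
}
```

In the unchecked version, the 257th compound comes back with id 0, aliasing the first entry. The checked version returns None once all 256 ids are taken.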

The test targets lived as markdown specs:

```
test-targets/
├── 01-tick-robustness.md
├── 03-pressure-model.md
├── 05-reactions.md
├── 07-shockwaves.md
├── 09-electrical.md
├── 11-rewind-determinism.md
└── ...
```

Each file defined the invariants for a subsystem. Kyle would consume the spec, produce an implementation plan, and then generate the integration tests. Thirteen PRs followed this pattern across nine subsystems.
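For a sense of what a spec like 11-rewind-determinism.md asks tests to check, here is a minimal sketch of the two invariants from Kyle's list: determinism under a fixed seed and rewind round-trip fidelity. It assumes the world state, including its RNG, is Clone + PartialEq and that rewind restores a cloned snapshot. Alembic's real rewind system may store something else entirely, and World and XorShift64 are hypothetical.

```rust
/// Minimal xorshift64 PRNG so the sketch needs no external crates.
#[derive(Clone, Debug, PartialEq)]
struct XorShift64(u64);

impl XorShift64 {
    fn next(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Hypothetical world: cell values plus the RNG. Keeping the RNG inside the
/// snapshotted state is what lets rewind-then-replay reproduce the same future.
#[derive(Clone, Debug, PartialEq)]
struct World {
    cells: Vec<u8>,
    rng: XorShift64,
}

impl World {
    fn new(seed: u64, len: usize) -> Self {
        // xorshift must never hold zero; `| 1` guarantees a nonzero state.
        World { cells: vec![0; len], rng: XorShift64(seed | 1) }
    }

    /// One tick: bump a pseudo-randomly chosen cell.
    fn step(&mut self) {
        let i = (self.rng.next() % self.cells.len() as u64) as usize;
        self.cells[i] = self.cells[i].wrapping_add(1);
    }

    fn run(&mut self, ticks: usize) {
        for _ in 0..ticks {
            self.step();
        }
    }
}
```

A test then asserts that two runs from the same seed match, that restoring a snapshot equals the snapshot, and that replaying from it reproduces the same future state.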


A few rounds into the flow, the tests Kyle wrote found actual bugs.

The first was post_clear_fresh_pile_does_not_detonate, a regression test for a post-detonation priming bug I'd noticed during playtesting. The test spawns a 1000-atom uranium pile, detonates it, clears the grid, then spawns a fresh pile below critical mass. The fresh pile shouldn't detonate.

```
post_clear_fresh_pile_does_not_detonate — caught the known bug
fresh 1000-atom pile after clearing emits 8 shockwaves instead of 0
```

Eight shockwaves where there should have been zero. I knew this bug existed. I'd seen it during playtesting. But it had never been codified as a test. Now it was. The test got marked #[ignore] with a clear comment referencing the spec invariant and my playtest observation, so CI stays green while the bug stays documented and visible.

The second was more subtle. stability_below_1500 checks that a uranium pile below critical mass stays stable over 500 ticks.

```
assertion `left == right` failed: stable 1000 pile transmuted U
  left: 997
 right: 1000
```

1000 uranium atoms became 997 after 500 ticks. A 0.3% drift. Small, but the question it raises matters: does the simulation have stochastic spontaneous transmutation below critical mass by design, or is this a real conservation bug? Either way, it's now a data point I can investigate. The test made the drift visible where it had been invisible before.
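If spontaneous transmutation turns out to be intended, an exact assert_eq! is the wrong shape for this test, and a bounded-rate check is one alternative. This is a minimal sketch, assuming each atom independently transmutes with some small per-tick probability p, so that after = before × (1 - p)^ticks. Under that assumption, 997 survivors out of 1000 after 500 ticks imply p ≈ 6 × 10^-6. The function names and any rate budget are hypothetical.

```rust
/// Per-atom, per-tick transmutation probability implied by a before/after
/// count, assuming independent decay: after = before * (1 - p)^ticks.
fn per_tick_rate(before: u32, after: u32, ticks: u32) -> f64 {
    let survival = after as f64 / before as f64;
    1.0 - survival.powf(1.0 / ticks as f64)
}

/// Fraction of atoms lost over the whole run (0.003 for 1000 -> 997).
fn drift_fraction(before: u32, after: u32) -> f64 {
    before.saturating_sub(after) as f64 / before as f64
}

/// Bounded-rate check: passes if no atoms appeared and the implied
/// per-tick rate stays at or under `max_rate`.
fn within_rate_budget(before: u32, after: u32, ticks: u32, max_rate: f64) -> bool {
    after <= before && per_tick_rate(before, after, ticks) <= max_rate
}
```

A budget of 1e-5 per tick would pass the observed drift. If conservation is meant to be exact, the original assert_eq! is the right test and the drift is a bug.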

Two bugs in 116 tests might sound like a low hit rate, but bug-finding was never the primary point. The real value is the 19 #[ignore]d tests that serve as living documentation of deferred work: 9 need private API exposure, 6 cover unimplemented features, 4 are policy configurations. Each has a TARGET comment. When any of those features ship in code, un-ignoring the corresponding test gives immediate verification. No test writing needed.

The regression net is the product. The bugs were a bonus.

In roughly two calendar days, the workflow produced 116 active tests across 12 integration files, covering all 9 subsystems, delivered via 13 PRs. The codebase went from binary-only with zero tests to having comprehensive integration coverage with supply chain checks: cargo audit, unsafe block scanning, .unwrap() scanning.
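The unsafe and .unwrap() scans can be as simple as a text pass over each source file. This is a minimal sketch, assuming a line-based scan with a committed unwrap budget. The function names and the policy are hypothetical, and the post doesn't say how Alembic's checks are actually implemented. (cargo audit is a separate, real tool that checks Cargo.lock against the RustSec advisory database.)

```rust
/// Count lines containing `needle`, ignoring anything after `//` on a line.
/// A crude heuristic: it can't see block comments or string contents
/// (e.g. "http://"), and it counts matching lines, not occurrences.
fn count_outside_line_comments(source: &str, needle: &str) -> usize {
    source
        .lines()
        .map(|line| match line.find("//") {
            Some(pos) => &line[..pos],
            None => line,
        })
        .filter(|code| code.contains(needle))
        .count()
}

/// Hypothetical policy: no `unsafe` at all, and `.unwrap()` lines
/// must not exceed a committed budget.
fn hygiene_ok(source: &str, unwrap_budget: usize) -> bool {
    count_outside_line_comments(source, "unsafe") == 0
        && count_outside_line_comments(source, ".unwrap()") <= unwrap_budget
}
```

In an integration test, you'd feed it each file under src/ via std::fs::read_to_string. Parsing with the syn crate would be more precise than string matching.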

68% of Rust developers spend more time debugging integration issues than writing new features, according to a 2024 survey. I'd been part of that statistic for the entirety of Alembic's development.

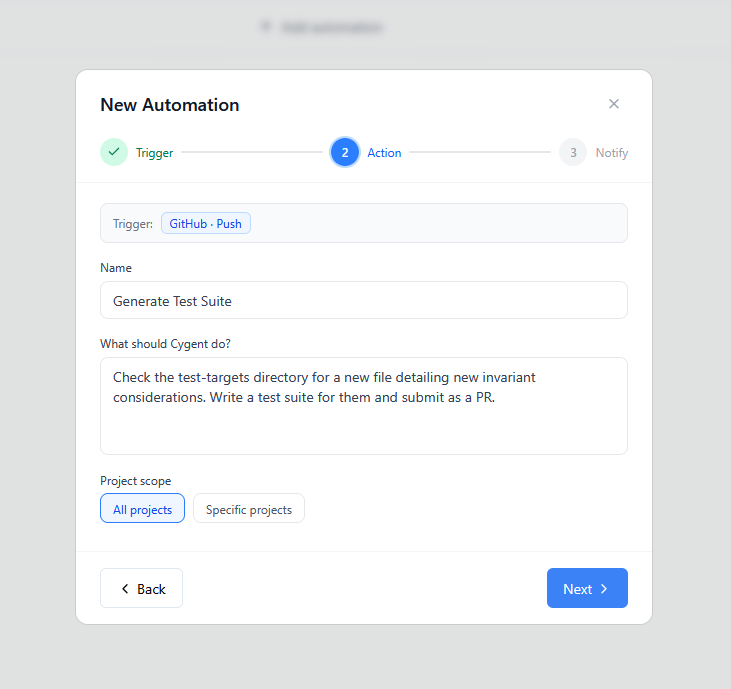

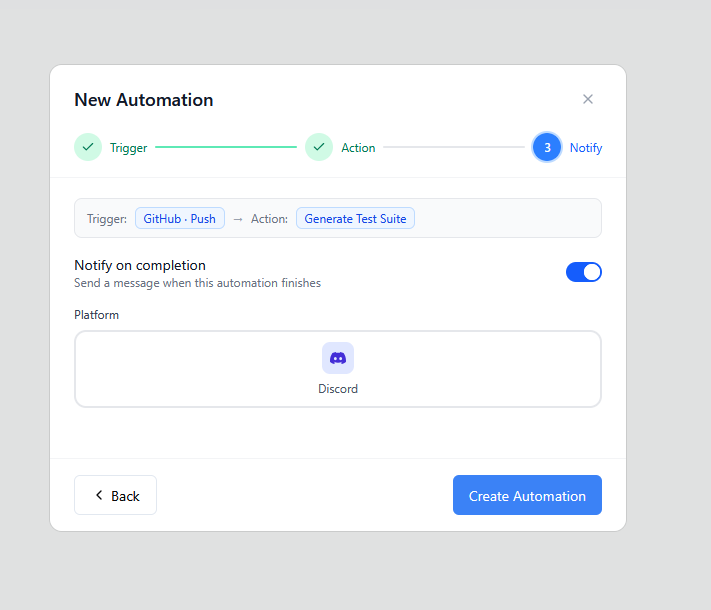

The automation is set up now. I've configured Cygent to generate a new test suite any time I push a change that contains a new invariant file. Automatic PR reviews are configured too. The workflow isn't just what happened over those two days. It's what happens going forward, on every push.

Kyle reads the code and finds what's there. Claude Code holds the design intent and catches what should be there. I make the calls. The places where they disagree or diverge are usually the most interesting places to look.

The project that started this experiment, a physics sim with no tests, no lib.rs, and a known bug I'd been ignoring, now has a regression net covering tick robustness, pressure modeling, thermal invariants, chemistry, nuclear criticality, shockwaves, paint and build mode, electrical circuits, rewind determinism, and supply chain hygiene. Nineteen more tests are documented and waiting for the code changes that will let them run.

The next bug that would have shipped silently now has to get past a test first.